There are two ways to run accessibility testing on your websites and applications: automated, rules-based testing and manual, expert analysis. The scan and the audit. Automated testing tends to be the holy grail for many organizations because it costs less and requires less expertise. It also has a broader reach: a single scan can analyze thousands of pages and components. Manual testing, on the other hand, is the only way to gain a complete picture of your compliance. Relying on automated testing alone leaves many accessibility criteria unchecked or only partially checked, so you get only a partial view.

For instance, an automated rules engine can find images that lack a text alternative, covering most of the ways one can be supplied, from an ‘alt’ attribute to an ARIA attribute. However, the automated tool cannot assess whether the alt text is a good, concise description of the image. For that, you need a human tester.
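To make the distinction concrete, here is a minimal sketch of what a rules-based alt text check looks like, written with Python's standard `html.parser`. It is a hypothetical illustration, not how ARC Rules are implemented, and it assumes a simplified rule: an `<img>` passes if it has an `alt`, `aria-label` or `aria-labelledby` attribute. Note that the image with `alt="image"` passes, even though a human tester would reject that description.

```python
from html.parser import HTMLParser

# Attributes that can supply a text alternative for an <img>.
# Simplified, hypothetical rule for illustration (not the ARC Rules logic).
TEXT_ALTERNATIVE_ATTRS = {"alt", "aria-label", "aria-labelledby"}


class MissingAltChecker(HTMLParser):
    """Collects the position of every <img> that has no text alternative attribute."""

    def __init__(self):
        super().__init__()
        self.violations = []  # (line, column) of each offending <img>

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        names = {name for name, _value in attrs}
        # An empty alt="" still counts: it marks the image as decorative.
        if not names & TEXT_ALTERNATIVE_ATTRS:
            self.violations.append(self.getpos())

    # Self-closing <img ... /> tags reach handle_starttag via the default
    # handle_startendtag, so no extra override is needed.


def find_images_without_alt(html):
    checker = MissingAltChecker()
    checker.feed(html)
    checker.close()
    return checker.violations


sample = """
<img src="logo.png" alt="TPGi logo">
<img src="chart.png" />
<img src="divider.png" alt="">
<img src="photo.jpg" alt="image">
"""

# Only chart.png (line 3) is reported; the unhelpful alt="image" passes.
print(find_images_without_alt(sample))
```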

Most organizations cannot afford to audit their online resources constantly. An audit of twenty screens and components can take around ten days, and the work often falls to a core accessibility team that quickly becomes overloaded. This is where automated testing comes to the rescue. Scanning shows you where the risk is greatest, what to prioritize, and where to focus your manual testing efforts.

This process always starts with a discovery phase, in which you scan a cross-section of all your assets and compare their performance. ARC Monitoring includes two layers of automated analysis: a broad sweep with our domain monitoring and a more focused analysis with user flows.

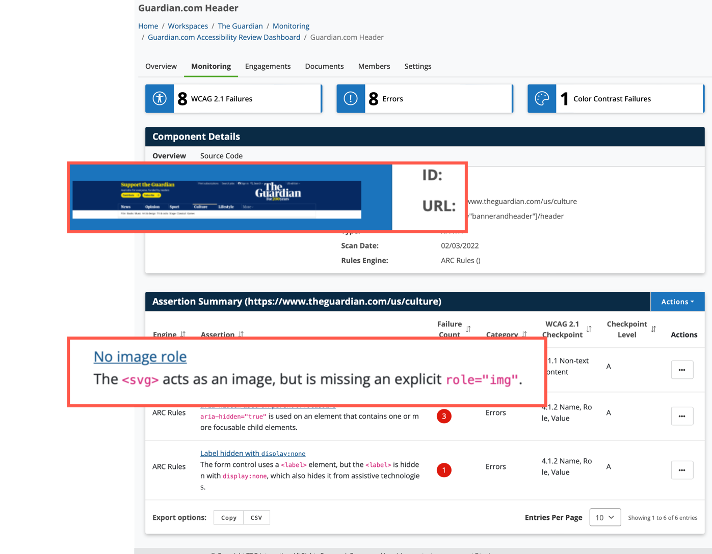

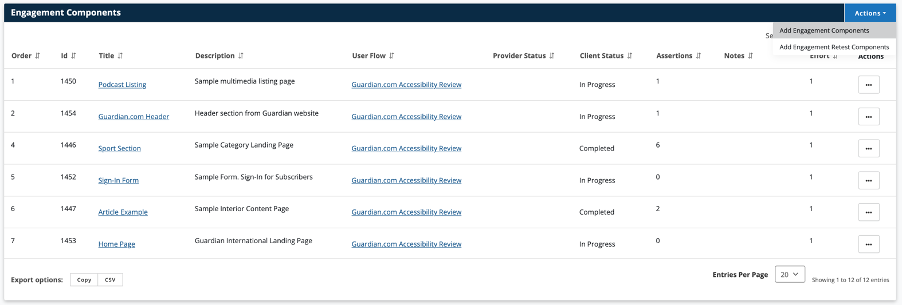

In the scenario in the screenshot above, repeated testing of a domain has revealed accessibility issues in the header component of a website. The header component is then added to a user flow, where it can be analysed separately from the rest of the page (user flows can scan interactions as well as specific elements on a page). The same is done with other templates and components that show a large number of accessibility defects. These then become a sample test set that can be run through a manual audit. The audit will reveal not only what is going on with those elements, but also what is happening across the wider site.
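The selection step described above can be sketched as a simple aggregation: count defects per component across every scanned page and take the worst offenders as the audit sample. This is a hypothetical illustration; it assumes a flat export of scan results as `(page_url, component_name)` pairs, one per defect, which is not a documented ARC format, and the function name `pick_audit_sample` is invented for the example.

```python
from collections import Counter
from typing import Iterable, Tuple


def pick_audit_sample(findings: Iterable[Tuple[str, str]], size: int) -> list[str]:
    """Return the `size` components with the most defects across all scanned pages.

    `findings` is a hypothetical flat export of scan results:
    one (page_url, component_name) pair per detected defect.
    """
    per_component = Counter(component for _page, component in findings)
    # most_common() sorts by count, highest first.
    return [name for name, _count in per_component.most_common(size)]


findings = [
    ("/", "header"),
    ("/about", "header"),
    ("/shop", "header"),
    ("/shop", "product-card"),
    ("/shop", "product-card"),
    ("/", "footer"),
]

print(pick_audit_sample(findings, 2))  # ['header', 'product-card']
```

Counting raw defects is only one possible weighting; a team might instead rank by the number of pages affected or by issue severity.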

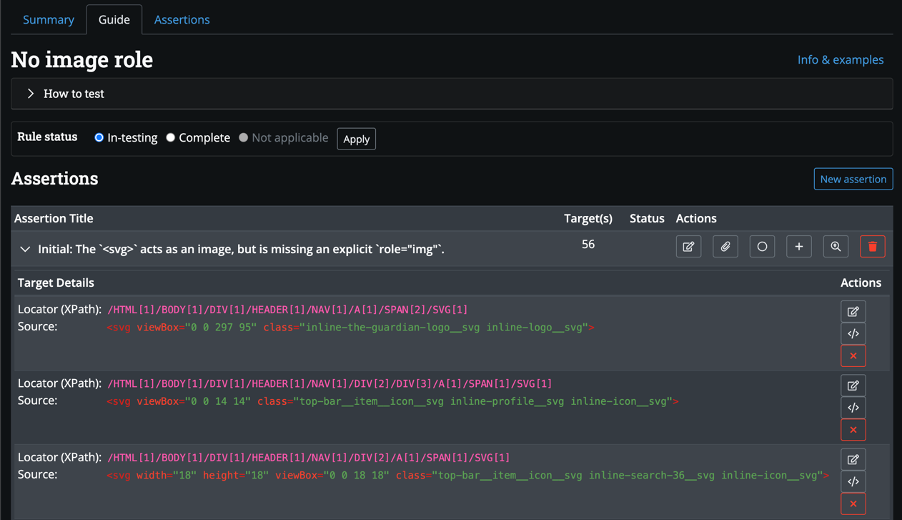

Automated testing can also be integrated into the audit process to make it more efficient. For example, ARC Capture is a new browser extension available with TPGi’s ARC Provider Package that supports most modern browsers. In it, testers can use the same automated rules to quickly assess dozens of WCAG criteria, which they can then verify manually. When they raise an issue in ARC Capture, automated tests can also log every instance of that issue in the component. In the screenshot example below, the tester has found SVG images without an appropriate role attribute. The automated engine has detected and flagged every instance, along with its location in the code. This saves the tester from having to find and log each one by hand.
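As a rough illustration of how a rule can log every instance of an issue with its location, the sketch below flags inline `<svg>` elements that lack `role="img"` unless they are hidden with `aria-hidden="true"`. The rule is a simplified assumption made for this example, not the actual ARC Rules check, and the `SvgRoleChecker` class is hypothetical.

```python
from html.parser import HTMLParser


class SvgRoleChecker(HTMLParser):
    """Logs every inline <svg> lacking role="img", unless hidden from assistive tech.

    Hypothetical, simplified rule for illustration (not the ARC Rules implementation).
    """

    def __init__(self):
        super().__init__()
        self.instances = []  # one dict per flagged <svg>, with its location

    def handle_starttag(self, tag, attrs):
        if tag != "svg":
            return
        attr = dict(attrs)
        if attr.get("aria-hidden") == "true":
            return  # decorative SVG, intentionally hidden from assistive technology
        role = (attr.get("role") or "").strip().lower()
        if role != "img":
            line, column = self.getpos()
            self.instances.append({"line": line, "column": column, "id": attr.get("id")})


component = """<header>
  <svg id="search-icon" viewBox="0 0 24 24"><path d="M0 0h24v24H0z"/></svg>
  <svg role="img" aria-label="Home" viewBox="0 0 24 24"></svg>
  <svg aria-hidden="true" viewBox="0 0 24 24"></svg>
  <svg id="menu-icon"></svg>
</header>"""

checker = SvgRoleChecker()
checker.feed(component)
checker.close()
for instance in checker.instances:
    print(instance)  # search-icon (line 2) and menu-icon (line 5) are flagged
```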

Challenges with Manual Testing

One of the challenges with manual testing is achieving consistency across different testers. There are often nuances in the way issues are interpreted and reported. With ARC Capture, testing is guided along a clear path. Everything the tester needs, from the test plan to the details of each component, is available in the tool, along with full instructions on how to run the tests and templated content managed by the TPGi Knowledge Center. Writing is probably the biggest effort in any manual analysis, but in ARC Capture, testers can load templates and match issues to previous results so that they report them in the same way, with consistent language.

All results logged in ARC Capture are automatically published to the ARC customer portal, where they are available in the same workspaces as the automated dashboards. This supports one further use of automated testing. While your teams remediate the defects found during the audit, you can monitor their progress with scheduled scanning. If the number of defects is going steadily down, that’s a good sign. If it is not, or if it is increasing, you might need to intervene, revisit the audit report or invest in some training. Once all the issues are resolved, the scheduled monitoring will tell you when it is time to do a final retest. After that, it is a case of watching those same tested components with monthly scans to ensure that no new issues creep in and that accessibility is maintained.
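The decision logic in that paragraph can be expressed as a small heuristic over the defect counts from successive scheduled scans. This is a hypothetical sketch, not an ARC feature: the status labels mirror the text, while the rules (a non-increasing series with an overall drop counts as "on track", any rise or a flat line means "intervene") are assumptions chosen for illustration.

```python
from typing import Sequence


def remediation_status(defect_counts: Sequence[int]) -> str:
    """Classify remediation progress from defect counts of successive scheduled scans.

    Hypothetical heuristic: the categories follow the article, the thresholds are assumptions.
    """
    if not defect_counts:
        raise ValueError("need at least one scan result")
    latest = defect_counts[-1]
    if latest == 0:
        return "ready for final retest"
    if len(defect_counts) < 2:
        return "not enough scans to judge a trend"
    # "Steadily down": never rising between scans, and lower now than at the start.
    never_rising = all(later <= earlier for earlier, later in zip(defect_counts, defect_counts[1:]))
    if never_rising and latest < defect_counts[0]:
        return "on track"
    return "intervene"  # flat or increasing: revisit the report or plan training


print(remediation_status([40, 31, 22, 10]))  # on track
print(remediation_status([40, 41, 45]))      # intervene
print(remediation_status([12, 5, 0]))        # ready for final retest
```

A real team would probably tolerate small fluctuations between scans rather than treating any single rise as a reason to intervene.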

By using automated and manual testing in this way, you can reduce labour and spread the work across your organization, away from your overburdened accessibility team.

The new ARC Provider Package is built on top of the ARC Platform and uses the same rule set, ARC Rules. It is aimed at Enterprise organizations that have reached the highest level of the accessibility maturity lifecycle and want to provide accessibility services (especially audits) to their own product and website teams.

In ARC Provider Portal, you can set up manual tests, build test plans and publish reports. It allows for a division of labour, with one group managing the audit project while another carries out the testing and logs the results in ARC Capture. For example, an accessibility team within an Enterprise can use ARC Provider Portal to set up audits and then have product teams do their own manual testing using the ARC Capture extension. There is even a built-in QA process enabling expert testers to check the work of less experienced teams.

The goal is to shift left and push accessibility into deeper parts of the development pipeline, leaving accessibility teams free to coordinate wider enterprise accessibility and to monitor the whole organization using automated scans. Combining all these tools and techniques, automated and manual, in one place can deliver significant cost savings and improved efficiency.

If you are searching for a single solution to streamline audits and communication across your team, schedule a demo to learn more about ARC Provider Package.