We’re back on Day Two of the Web Directions Summit 2023 conference in Sydney, Australia, looking forward to another packed day of what’s on the web technology horizon.

If you haven’t already, catch up on Day One, and join us as we look for new insights into digital accessibility, artificial intelligence, and the intersection of the two.

Day Two Opening Keynote: Steam Engine Time

Mark Pesce, broadcaster and futurist

The title of Mark’s talk refers to his proposition that we are still in the early days of AI, and its benefits and limitations are analogous to those we experienced in the age of steam power. Having started his talk by making clear that there really is no such thing as “artificial intelligence” – by definition intelligence cannot be artificially derived – Mark explained how human consciousness and cognition evolve from birth to become more complex and refined over time.

He suggested that what we call AI still has a great deal of evolution ahead of it, not unlike how clumsy – albeit revolutionary – steam power was in its day before giving way to more complex, streamlined and effective technologies.

My takeaway was that there is opportunity ahead to manage the evolution of AI and its role on the web and in day-to-day life, if we approach it without fear but with care and caution.

Design + AI = Good or Bad?

MC Monsalve & Phil Banks, Product Design Team Lead & Design Lead, ABC iView

MC and Phil focused on how the principles of AI could support the ABC, Australia’s national radio and television broadcaster, in enhancing user experience. With its multiple outlets in free-to-air national TV broadcasting, live streaming, video on demand, national and local radio broadcasting, podcasting, and apps, the ABC carries a responsibility as a publicly funded entity to provide content in metropolitan, regional and remote parts of Australia to all ages from the very young to the very old. It also has to do this in a way that satisfies the customized and personalized demands of its individual users. AI is a necessary and usable tool to meet these demands. It is being used now in various ways and its use will continue to grow. It’s therefore important to ensure that AI is used accurately, ethically and responsibly, and the ABC aims to be a leader in this.

My takeaway was that the ABC accepts the responsibility of the national public content network to ensure that AI is used to the overall benefit of its users in every way.

Experimenting with AI at the ABC

Anna Dixon, Senior Service Designer, ABC

Anna explained the activities of the ABC’s Innovation Lab in exploring how AI is being applied to its products. Anna gave examples that focused on accessibility, such as AI-generated transcripts of radio and television presentations and synthesized speech generated from text by AI, as well as more general ways that AI is being used to enhance and customize user experience, such as automated language translation in both text and voice. While user feedback has been very positive regarding these innovations, Anna noted that in most cases, an element of human intervention is still required in the form of checking and editing.

In the Q&A, I asked about other ways in which the Innovation Lab was aiming to enhance accessibility for people with disabilities, and Anna responded with enthusiasm that the Lab is actively exploring the potential for AI image recognition to produce automated audio description and the production of AI-generated automated captions, among other things. She emphasized that their user research included people with disabilities, and reiterated the need for human intervention for accuracy, bias, and quality assurance.

My takeaway was that AI can be used to improve broad user experience, and to allow greater customization to individual user preferences, including to enhance digital accessibility for people with disabilities.

Conversational AI Has a Massive, UX-Shaped Hole

Peter Isaacs, Senior Conversation Design Advocate, Voiceflow

Peter focused on the need to improve the way AI handles conversation, clunky and stilted chatbots being a prime example. Peter sees UX practitioners as the key to making AI voice interfaces more human-like and natural, bringing empathy and user research to the task of training voice AI. At the same time, UX designers need to engage with and understand AI and ML, creating a new skill set around Conversational Design.

In the Q&A, I asked about how, given the apparent “black box” process of using AI, UX practitioners could insert themselves into the process of generating conversational AI, and Peter responded that it has to be at both ends of the process, in influencing the material on which AI is trained before the generation process, and being able to affect the output before it’s made available to humans.

My takeaway was that Conversational AI has significant implications for digital accessibility, with the potential to make user interfaces more accessible to some people with disabilities, and less accessible for others.

Baking Accessibility into Your Design System

Simon Mateljan, Design Manager, Atlassian

Simon gave an overview of how a design system can be constructed and developed to meet digital accessibility needs, and how this is not something that can be applied on top of a design system but needs to be baked in from the start. Simon described how the Atlassian design system applies to a large range of products, which demands a design system that is both consistent and respectful of individual product specifications. It inevitably requires some education and explanation for its users, and needs to allow for ongoing refinement over time, while remaining true to brand requirements as well as digital accessibility standards.

My takeaway was that it is possible to create an accessible design system for a large company with a diverse range of products, if the company chooses to set that as an aim and supports the work required.

WCAG 2.2 – What it Means for Designers

Julie Grundy & Zoë Haughton, Senior Digital Accessibility Consultants, Intopia

Julie and Zoë walked us through the changes to the Web Content Accessibility Guidelines in version 2.2, explaining what each new success criterion is, what it focuses on, and how to test for it. Julie and Zoë explained the success criteria in language designers could understand and provided practical examples.

In the Q&A, I asked about how to manage attitudes from designers that, for example, the new Focus Not Obscured success criteria were redundant because their issues were already covered by Focus Visible. Julie responded that accessibility consultants need to convey that the new success criteria are refinements in addition to Focus Visible, allowing identification of more specific issues and techniques to remediate them. They agreed that this was also an education issue for more experienced accessibility engineers, but noted that ultimately the goal is to fix content accessibility problems and whether that’s done under one SC or another is less important than that they are fixed.

My takeaway was that WCAG 2.2 aims to build on WCAG 2.1 and improve accessibility guidance on issues that relate to users with cognitive disabilities, low vision users, and touch screen interfaces.

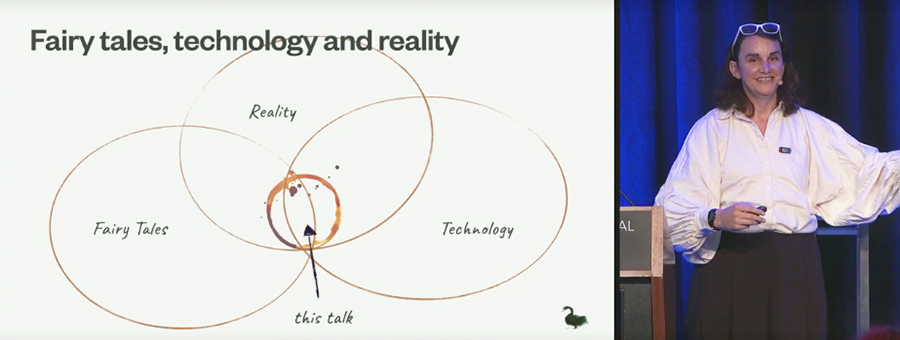

Day Two Closing Keynote: All the Things That Seem to Matter

Tea Uglow, Director, Dark Swan Institute

Tea’s talk was an over-arching view of how web technology has evolved and what the future might hold, seen through the lens of a technologist who founded Creative Labs for Google in Sydney and London and has become noted for her work in the intersections of technology, arts and culture. Tea walked us through an anecdotal timeline that gave a perspective on the history of web technology, informed by both her work and personal life, including her gender transition and her LGBTQ advocacy and activism.

Her reflections were deeply incisive and forthright (expletives, ribaldry, and possible libel included), pitching her own experience of how obstructionist working in web tech can be against the ongoing potential for inclusion, liberation and creative development.

My takeaway was that the history of web technology is filled with contradictions, frustrations and creative breakthroughs, and what its future holds largely depends on us, the people who build the web, individually and collectively.

Conclusion

As always at live conferences, I gained as much from the conversations between sessions as from the talks themselves. As well as catching up with my tribe – long-term, like-minded web tech friends and colleagues – I also met several new people with whom I engaged to great effect, including some who worked in higher education, design systems, product design, cloud development, content strategy, and, of course, digital accessibility. I’ve no doubt these new connections will be as fruitful and long-lasting as the old tribe.

It’s worth noting that Web Directions makes recordings of all Summit presentations available to attendees post-conference, so while I physically attended seven talks, I’ll be able to view videos later of the other 78 across the seven tracks of the conference. This is done via the Conffab archive, which uses the most accessible platform for such a purpose I’ve ever encountered.

Videos have full user controls, closed captions that can be turned on or off, a rolling transcript that is synchronized to the speaker with current sections highlighted, a separate searchable transcript that can be turned on or off, control over video quality and speed, and accessible slides that are also synchronized to the presentation. These people do conference presentations right.

The Conffab library has presentations from more than 40 Web Directions conferences over the last 10+ years, and I believe it’s possible to subscribe without being a conference attendee. Part of the thinking is that people should be able to construct their own personalized conferences consisting of selected presentations.

My thanks go to the Web Directions team for organizing such a brilliant conference, and to TPGi for supporting my attendance. I’m already looking forward to the next one.